Sensor Fusion - Aerodynamic Decelerator Systems Laboratory


This research was conducted in parallel with the Video Scoring project. Both projects pursued the same goal, estimating the position and orientation of a parachute recovery system (PRS) throughout the entire airdrop, from aircraft exit until ground impact, but they used different sources of data. This project relied entirely on data from an onboard inertial measurement unit (IMU). The reasons for using only an IMU are as follows.

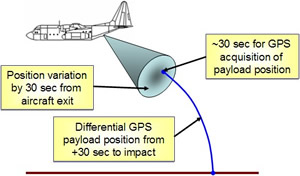

The optical ground stations, or KTMs (discussed on the Video Scoring project page), can track only a limited number of test articles simultaneously (only one PRS at a time if high-resolution imagery is required), so they cannot be used in a mass airdrop. In addition, they require significant manpower, take days of manual data processing, are cost-prohibitive for many test programs, and are a limited material resource. For this reason, Precision Guided PRS (PGPRS) rely on onboard GPS receivers for real-time operational trajectories. However, these GPS receivers take approximately 30 seconds after aircraft exit to produce a solution (Fig. A), and they are susceptible to jamming (where GPS is available at all).

Figure A. Acquiring GPS position.

The outcome of this project was the development of a Payload Derived Position Acquisition System (PDPAS). The PDPAS is a set of instrumentation and a software algorithm that can be installed on a PRS. It is intended to generate PRS trajectories in real time for both testing and operational use, without requiring GPS, and to produce a six-degree-of-freedom (6DoF) solution for the PRS trajectory from aircraft exit to ground impact.

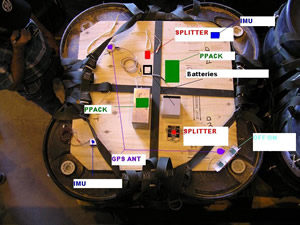

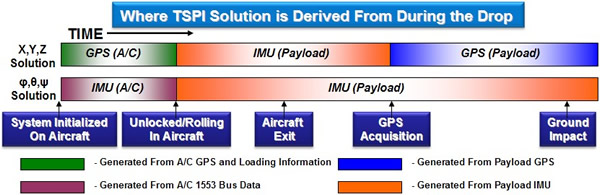

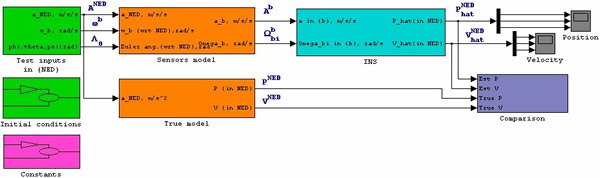

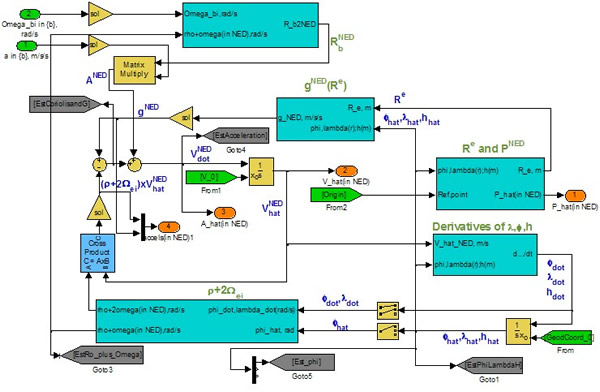

Figure B shows the hardware setup on top of the payload. In this setup, the GPS receiver was still used so that the reference GPS data (available from about 30 seconds after release) could be compared against the PDPAS estimates. Figure C shows the rigged system before deployment from a C-130 aircraft. The overall sequence of events before and after the PRS release is shown in Fig. D, and Fig. E presents the Simulink model developed to process the IMU data. PDPAS uses a strapdown IMU mechanization (Fig. F).

Figure B. The GPS/IMU set atop a payload.

Figure C. The PRS in the cargo bay.

Figure D. Three stages of the pose estimation.

Figure E. Simulink model for processing IMU data.

Figure F. Strapdown mechanization of PDPAS.
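In a strapdown mechanization the IMU is fixed to the payload, and its gyro and accelerometer outputs are integrated numerically. As a minimal sketch of one update step, assuming a flat, non-rotating Earth with a local north-east-down (NED) frame, constant gravity of 9.81 m/s², and ideal (already calibrated) sensor readings, the code below integrates the body angular rate into a direction cosine matrix, rotates the measured specific force into the NED frame, adds gravity, and integrates to velocity and position. It is not the PDPAS Simulink model of Fig. E, and all function names are hypothetical.

```python
import numpy as np

# Assumption: constant gravity in a local NED frame (down is positive).
G_NED = np.array([0.0, 0.0, 9.81])

def skew(w):
    """3x3 skew-symmetric matrix such that skew(w) @ x == np.cross(w, x)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])

def rotation_increment(phi):
    """Rodrigues formula: rotation matrix for a body rotation vector phi (rad)."""
    angle = np.linalg.norm(phi)
    if angle < 1e-12:
        return np.eye(3) + skew(phi)  # first-order approximation for tiny angles
    k = skew(phi / angle)
    return np.eye(3) + np.sin(angle) * k + (1.0 - np.cos(angle)) * (k @ k)

def strapdown_step(C_bn, v_n, p_n, gyro_b, accel_b, dt):
    """One strapdown update (hypothetical helper).

    C_bn    : body-to-NED direction cosine matrix
    v_n, p_n: velocity (m/s) and position (m) in the local NED frame
    gyro_b  : measured angular rate (rad/s, body axes)
    accel_b : measured specific force (m/s^2, body axes)
    """
    # 1. Attitude: apply the body-frame rotation accumulated over the step.
    C_next = C_bn @ rotation_increment(gyro_b * dt)
    # 2. Velocity: rotate specific force to NED using the mid-step attitude, add gravity.
    C_mid = C_bn @ rotation_increment(0.5 * gyro_b * dt)
    a_n = C_mid @ accel_b + G_NED
    v_next = v_n + a_n * dt
    # 3. Position: trapezoidal integration of velocity.
    p_next = p_n + 0.5 * (v_n + v_next) * dt
    return C_next, v_next, p_next

def euler_zyx(C_bn):
    """Roll, pitch, yaw (rad) from a body-to-NED DCM, ZYX (yaw-pitch-roll) convention."""
    roll = np.arctan2(C_bn[2, 1], C_bn[2, 2])
    pitch = -np.arcsin(np.clip(C_bn[2, 0], -1.0, 1.0))
    yaw = np.arctan2(C_bn[1, 0], C_bn[0, 0])
    return roll, pitch, yaw
```

For example, feeding zero gyro and accelerometer readings (free fall) for 1 s at a hypothetical 100 Hz rate yields a downward velocity of about 9.81 m/s and a drop of about 4.9 m. Earth-rotation and transport-rate terms are omitted for brevity.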

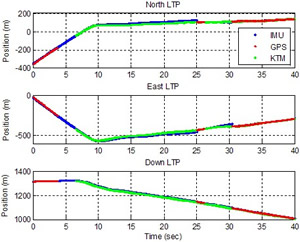

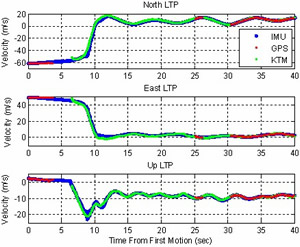


Figure G. Comparison of INS position estimation (a) and the corresponding errors (b).

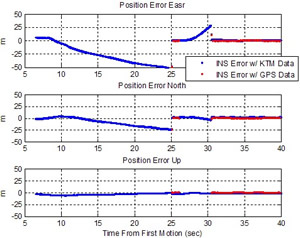

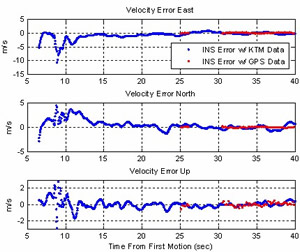


Figure H. Comparison of INS velocity estimation (a) and the corresponding errors (b).

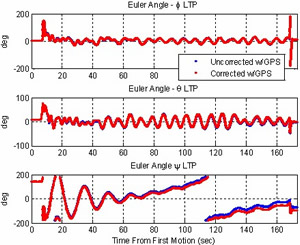

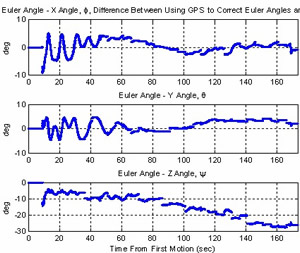


Figure I. Comparison of INS Euler angle estimation (a) and the corresponding errors (b).
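INS errors grow with time because sensor errors are integrated once into velocity and again into position. As a rough, generic guide (not derived from the data in Figures G through I), standard first-order approximations, valid for intervals far shorter than the roughly 84-minute Schuler period and ignoring Earth rotation, relate three common error sources to position error. The sketch below evaluates them over 30 seconds, the GPS acquisition delay quoted above; the bias values are illustrative assumptions, not specifications of the IMU used in this project.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2 (assumed constant)

def accel_bias_error(bias, t):
    """Position error (m) after t seconds from a constant accelerometer bias (m/s^2).

    The bias is integrated twice: delta_p = 0.5 * b * t^2.
    """
    return 0.5 * bias * t ** 2

def tilt_error(tilt, t):
    """Horizontal position error (m) from a constant attitude (tilt) error in rad.

    A small tilt leaks about g * tilt of gravity into the horizontal channel,
    which is then integrated twice like an accelerometer bias.
    """
    return 0.5 * G * tilt * t ** 2

def gyro_bias_error(drift, t):
    """Horizontal position error (m) from a constant gyro bias (rad/s).

    The tilt grows as drift * t, so the position error grows as g * drift * t^3 / 6.
    """
    return G * drift * t ** 3 / 6.0

if __name__ == "__main__":
    t = 30.0  # approximate GPS acquisition delay after aircraft exit
    print(f"accel bias 0.01 m/s^2 : {accel_bias_error(0.01, t):.2f} m")
    print(f"tilt error 1 mrad     : {tilt_error(1e-3, t):.2f} m")
    print(f"gyro bias 10 deg/h    : {gyro_bias_error(math.radians(10) / 3600, t):.2f} m")
```

With these illustrative values, each term contributes a few meters after 30 s; because the gyro-bias term grows with t³, it overtakes the other two after about a minute, which is one reason gyro quality weighs heavily in IMU trade-offs.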

We plan to experiment with more accurate IMUs and to perform trade-off studies that quantify the cost of reaching the ultimate goal of an IMU-only PDPAS. Further development of this research might also include replacing the INS with a swarm of low-cost miniaturized MEMS accelerometers placed at different locations on the payload, which would allow both linear and angular accelerations to be computed.
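The accelerometer-swarm idea rests on rigid-body kinematics. Assuming a rigid payload and triaxial accelerometers at known lever arms r_i from a reference point, each sensor measures f_i = f_0 + α × r_i + ω × (ω × r_i), where f_0 is the specific force at the reference point, α is the angular acceleration, and ω is the angular rate (gravity is identical at every sensor, so it only enters f_0). Collecting the last two terms as M r_i, where M has antisymmetric part [α]× and symmetric part ω ωᵀ - |ω|² I, makes the model linear in 12 unknowns, so four or more non-coplanar triaxial accelerometers allow f_0 and α to be estimated by least squares. The sketch below is a noise-free illustration of this textbook "gyro-free IMU" formulation; the function name and any sensor layout are hypothetical, and it is not necessarily the method used in the papers listed below.

```python
import numpy as np

def swarm_accelerations(lever_arms, specific_forces):
    """Estimate reference-point specific force f_0 and angular acceleration alpha.

    lever_arms      : (N, 3) sensor positions relative to the reference point (m, body axes)
    specific_forces : (N, 3) triaxial accelerometer readings (m/s^2, body axes)
    Rigid-body model: f_i = f_0 + M @ r_i, with M = [alpha]x + [omega]x^2.
    """
    r = np.asarray(lever_arms, dtype=float)
    f = np.asarray(specific_forces, dtype=float)
    # Each row of H is [1, r_x, r_y, r_z]; solve f = H @ X for X (4 x 3) in the least-squares sense.
    H = np.hstack([np.ones((r.shape[0], 1)), r])
    X, _, rank, _ = np.linalg.lstsq(H, f, rcond=None)
    if rank < 4:
        raise ValueError("need at least four non-coplanar sensors")
    f0 = X[0]
    M = X[1:].T  # row i of H @ X is f_0^T + r_i^T M^T
    # The antisymmetric part of M is the cross-product matrix of alpha.
    S = 0.5 * (M - M.T)
    alpha = np.array([S[2, 1], S[0, 2], S[1, 0]])
    return f0, alpha
```

In practice the angular rate would then have to be obtained by integrating α, so sensor noise and lever-arm errors feed directly into attitude drift; this is the observability and calibration question that the swarm literature addresses.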

Publications

In addition to the papers on sensor fusion written by NPS students and faculty members and listed on the ADSC Publications page, below are some earlier papers on using a swarm of low-cost miniaturized MEMS accelerometers to estimate a vehicle's attitude, a topic that an extension of this research project might address:

- Zilli, S., Frezza, R., and Beghi, A., “Model Based GPS/INS Integration for High Accuracy Land Vehicle Applications: Calibration of a Swarm of MEMS Sensors,” Proceedings of the IEEE/ASME International Conference on Advanced Intelligent Mechatronics, Monterey, CA, July 24-28, 2005, pp. 952-956.

- Spagnol, C., Muradore, R., Assom, M., Beghi, A., and Frezza, R., “Trajectory Reconstruction by Integration of GPS and a Swarm of MEMS Accelerometers: Model and Analysis of Observability,” Proceedings of the IEEE Intelligent Transportation Systems Conference, Washington, D.C., October 3-4, 2004.

- Nagel, D.J., “Microsensor Clusters,” Microelectronics Journal, vol. 33, 2002, pp. 107-119.